Historically, Portainer has been a pure "ClickOps" tool thanks to its rich UI, but that is no longer the case.

As of Portainer CE 2.11 and Portainer Business 2.12, Portainer includes comprehensive, built-in GitOps for Docker and Kubernetes. This functionality covers around 80% of the use cases where you need a GitOps solution that "just works". It's perfect if you don't already have a solution in place, or don't want to spend weeks or months learning something that should be invisible!

But how does Portainer's GitOps engine fit into your development pipeline?

Below is a fairly typical flow that most organizations aspire to when adopting a CI/CD pipeline that spans multiple developers or teams.

Getting code into Production starts with the developers who write it. Developers almost always work locally, on their laptops, using the IDEs of their choice. More often than not, they want to see their code changes running locally so they can iterate before committing the code back to the company repo for testing. To run code locally, developers build their own scripts and tooling, likely using VS Code, bash scripts, and Docker.

The developer (or a DevOps teammate) generally writes a Dockerfile, which their scripts use to build a container image of the app; the scripts then run that image as a container (along with any supporting services) on the local Docker instance. At this level, developers run standalone Docker instances (likely Docker Desktop) on their machines and are expected to know how to use Docker to inspect and triage their containers. Portainer can give developers the visibility they need to triage their applications locally: logs, performance, crash states and reasons, and so on.

Once developers are confident in their work, they commit their changes back to the centralized code repo, which is where Continuous Integration (CI) tooling normally kicks in.

A CI tool (like Azure DevOps or GitHub Actions) will trigger an automated build of an image (using the Dockerfiles created by the developers or DevOps team), then push the image to an image registry and the deployment manifest (plus any other artifacts) to a Git repo that holds the manifests for the application. Next, the CI tool either notifies a CD tool that a deployment needs to take place, or waits for a deployment to occur outside of its control (e.g. via GitOps tooling), before executing automated unit tests against the deployed environment. Once the automated tests have completed successfully, the CI tool will typically notify the CD tool that the deployed environment is no longer needed and should be destroyed.
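
To make the "push the manifest" step concrete, here is a minimal Python sketch of what a CI job might do after building and pushing an image: rewrite the image tag in a Compose or Kubernetes manifest before committing it back to Git. The helper name `bump_image_tag`, the registry path, and the tags are all hypothetical, and a real pipeline might prefer a YAML-aware tool; this text-based version only understands simple `image: name:tag` lines.

```python
import re


def bump_image_tag(manifest: str, image: str, new_tag: str) -> str:
    """Return `manifest` with every `image: <image>:<tag>` line pointing at `new_tag`.

    Hypothetical CI helper: it edits the manifest as plain text, so it only
    handles unquoted `image:` lines (optionally preceded by a YAML list dash).
    """
    pattern = re.compile(
        # Capture indentation, an optional "- ", the "image:" key and the image
        # name, then match the current tag (anything up to whitespace or "#").
        r"^(?P<prefix>[ \t]*(?:-[ \t]+)?image:[ \t]*" + re.escape(image) + r"):(?P<tag>[^\s#]+)",
        re.MULTILINE,
    )
    updated, count = pattern.subn(lambda m: f"{m.group('prefix')}:{new_tag}", manifest)
    if count == 0:
        raise ValueError(f"no reference to {image!r} found in manifest")
    return updated


if __name__ == "__main__":
    compose = (
        "services:\n"
        "  web:\n"
        "    image: registry.example.com/shop/web:1.4.2\n"
        "    ports:\n"
        '      - "80:80"\n'
    )
    print(bump_image_tag(compose, "registry.example.com/shop/web", "1.5.0"))
```

The exact image name is matched (so `shop/web-worker` is left alone when bumping `shop/web`), and the function fails loudly if nothing was changed, which stops a pipeline from committing a no-op manifest.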

At the next stage, usually triggered by the automated tests but sometimes by a human, the successfully tested branch is merged back into a release trunk/branch, which initiates the second cycle of CI automation. Again, the CI builds an image, the image and manifests are pushed to their repos, and the CI then notifies the CD tool (or waits) for deployment to a staging environment. Once the staging environment is running, the CI executes automated integration tests, often supplemented with manual tests by a QA team. Once all integration tests have passed, the CI typically notifies the CD tool that the staging environment can be destroyed. The CI tool then retags the built image with the production tags and pushes the tags and manifests to a repo.
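
The "retag and push" promotion step could look something like the sketch below, which only builds the `docker tag` and `docker push` command lines rather than running them. The helper name `promotion_commands` and the production tag names (`1.5.0`, `stable`) are assumptions for illustration; your registry and tagging convention will differ.

```python
import shlex
from typing import List


def promotion_commands(image: str, tested_tag: str, prod_tags: List[str]) -> List[List[str]]:
    """Build the Docker CLI calls that promote an already-tested image.

    Hypothetical helper: nothing is rebuilt; the exact image that passed
    staging is given extra (production) tags and pushed under each of them.
    """
    source = f"{image}:{tested_tag}"
    commands: List[List[str]] = []
    for tag in prod_tags:
        target = f"{image}:{tag}"
        commands.append(["docker", "tag", source, target])  # alias the tested image
        commands.append(["docker", "push", target])         # publish the new tag
    return commands


if __name__ == "__main__":
    for cmd in promotion_commands("registry.example.com/shop/web", "rc-42", ["1.5.0", "stable"]):
        # Print for review; passing `cmd` to subprocess.run(cmd, check=True) would execute it.
        print(shlex.join(cmd))
```

Retagging rather than rebuilding is the point of this stage: Production receives byte-for-byte the image that passed the integration tests.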

At the final stage, the CI either initiates the update of Production by notifying a CD tool to deploy the new production build, or exits if there is a GitOps tool polling for changes.

So, how does Portainer fit into the flow?

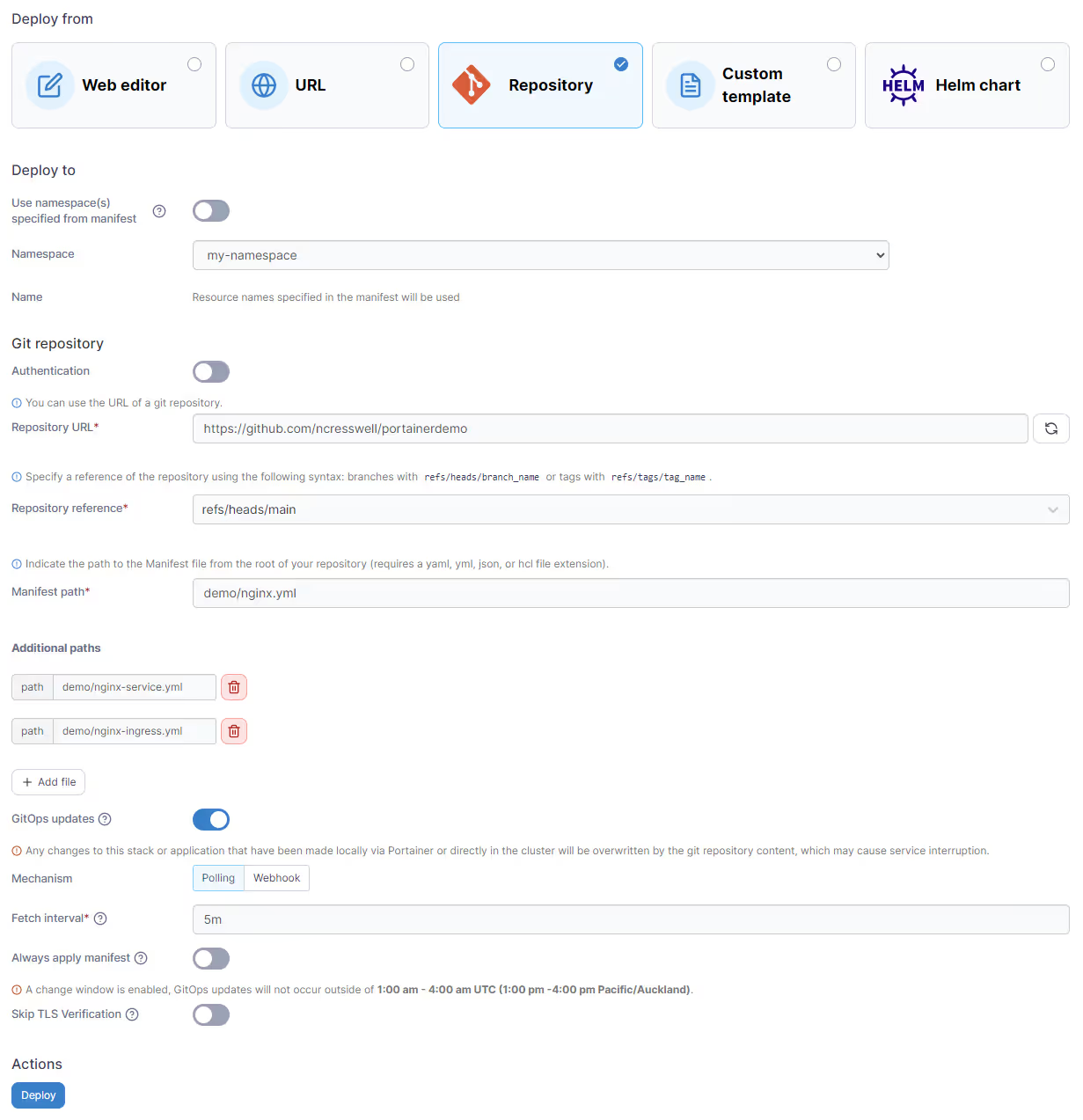

Portainer is not a CI tool, and it isn't even a CD tool (although, because our GitOps tooling can be configured to poll for changes or be triggered by a webhook, it can be thought of as one). Portainer is a GitOps tool. If you haven't come across the term, GitOps means that all deployment configuration manifests describing the "desired state" are held in Git, and automation is triggered by changes that occur in Git. As a result, GitOps dramatically reduces context switching for developers, because they can trigger deployment updates from within the tool they use every day.
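
As an illustration of the webhook option, a CI job could notify Portainer with a single HTTP POST once the manifest has been pushed. The sketch below uses only Python's standard library. The URL shape `.../api/stacks/webhooks/<webhook-id>` is an assumption, so copy the exact webhook URL Portainer shows for your stack rather than building it by hand; the function names are hypothetical.

```python
import urllib.request


def build_webhook_request(webhook_url: str) -> urllib.request.Request:
    """Build the POST request that asks Portainer to redeploy a stack.

    `webhook_url` is assumed to be the URL Portainer displays when the stack's
    webhook is enabled; no body or auth header is added here.
    """
    return urllib.request.Request(webhook_url, data=b"", method="POST")


def trigger_redeploy(webhook_url: str, timeout_s: float = 10.0) -> int:
    """Send the webhook and return the HTTP status (urlopen raises on 4xx/5xx)."""
    with urllib.request.urlopen(build_webhook_request(webhook_url), timeout=timeout_s) as resp:
        return resp.status


if __name__ == "__main__":
    # Placeholder URL (assumption): replace with the one copied from Portainer.
    url = "https://portainer.example.com:9443/api/stacks/webhooks/<webhook-id>"
    print(build_webhook_request(url).get_method(), url)
```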

From a CI perspective, you simply configure your pipeline to build images and push manifests, then wait for Portainer to detect the changes and redeploy the environment accordingly. At a minimum, a manifest would change to reference a new image version tag; Portainer would detect that and update the running deployment to use the new image.

But how is Portainer's GitOps engine different from others on the market? First, Portainer's GitOps capability works with Docker, Docker Swarm, and Kubernetes, so if you run a hybrid environment, we are the obvious choice.

Secondly, Portainer runs the GitOps engine inside Portainer itself, not inside your clusters, so the engine puts ZERO load on your clusters and there is nothing to install or maintain there. Portainer polls for changes and then applies them to the clusters on your behalf, regardless of where they are hosted or which version of Kubernetes they run.
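
Conceptually, the polling side works like the loop below: check which commit the tracked branch points to, and redeploy only when it differs from the last commit deployed. This is not Portainer's code. It is a minimal Python sketch in which `git ls-remote` stands in for the change check, `apply` is a hypothetical callback that would redeploy the stack, and the five-minute interval is an arbitrary assumption.

```python
import subprocess
import time
from dataclasses import dataclass
from typing import Callable, Optional


def remote_head(repo_url: str, ref: str = "refs/heads/main") -> str:
    """Return the commit SHA that `ref` currently points to on the remote."""
    result = subprocess.run(
        ["git", "ls-remote", repo_url, ref],
        capture_output=True, text=True, check=True,
    )
    if not result.stdout.strip():
        raise LookupError(f"{ref} not found in {repo_url}")
    return result.stdout.split()[0]  # output lines look like "<sha>\t<ref>"


@dataclass
class TrackedStack:
    last_deployed_sha: Optional[str] = None


def reconcile_once(stack: TrackedStack, get_head: Callable[[], str],
                   apply: Callable[[str], None]) -> bool:
    """Redeploy if Git moved since the last deployment; return True if it did."""
    head = get_head()
    if head == stack.last_deployed_sha:
        return False              # desired state unchanged, nothing to do
    apply(head)                   # hypothetical: redeploy the stack at this commit
    stack.last_deployed_sha = head
    return True


def poll_forever(stack: TrackedStack, repo_url: str,
                 apply: Callable[[str], None], interval_s: float = 300.0) -> None:
    """Check the remote every `interval_s` seconds (assumed value) and reconcile."""
    while True:
        reconcile_once(stack, lambda: remote_head(repo_url), apply)
        time.sleep(interval_s)
```

Keeping the decision (`reconcile_once`) separate from the loop makes it easy to test, and shows where a webhook fits: it simply runs the same reconcile step immediately instead of waiting for the next poll.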

Portainer Business has additional capabilities, such as an "enforce" mode, which overrides the running deployment with what's defined in Git, and also a "change window" option, which disables automation outside of a preset time window (which is brilliant if your apps are not fully capable of rolling updates, but you still want the benefit of GitOps).
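
How the two Business options interact can be sketched as a single decision function. This is a hypothetical model, not Portainer's implementation: it assumes a daily window given as start and end times (allowing windows that wrap past midnight), that nothing is applied outside the window, and that enforce mode additionally redeploys when the running deployment has drifted from Git even though Git itself hasn't changed.

```python
from datetime import time
from typing import Optional, Tuple


def in_change_window(now: time, start: time, end: time) -> bool:
    """True if `now` falls inside the daily window [start, end)."""
    if start <= end:
        return start <= now < end
    # Window wraps past midnight, e.g. 22:00 to 02:00.
    return now >= start or now < end


def should_redeploy(git_changed: bool, live_drifted: bool, enforce: bool,
                    now: time, window: Optional[Tuple[time, time]] = None) -> bool:
    """Hypothetical policy combining a change window with enforce mode."""
    if window is not None and not in_change_window(now, *window):
        return False  # outside the allowed window: defer all automation
    # Git changes always apply; manual drift is only reverted in enforce mode.
    return git_changed or (enforce and live_drifted)
```

For example, with a 22:00 to 02:00 window, a Git change detected at 14:00 gives `should_redeploy(True, False, False, time(14, 0), (time(22, 0), time(2, 0)))`, which is `False`: the update waits until the window opens.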

Portainer Business Edition also adds support (on Docker Standalone and Docker Swarm environments) for storing supporting files in your Git repository alongside your stack YAML, and automatically deploying those files to your environments when you deploy the stack. We call this relative path support, and you can read more about how it works in our documentation.

Because our GitOps is native to Portainer and so simple to use, give it a try; you will be surprised how easy it is to get started.

Try Portainer with 3 Nodes Free

If you're ready to get started with Portainer Business, 3 nodes free is a great place to begin. If you'd prefer to get in touch with us, we'd love to hear from you!

Neil